Vision | STM32Cube.AI | STM32 AI MCU | Partner | Video

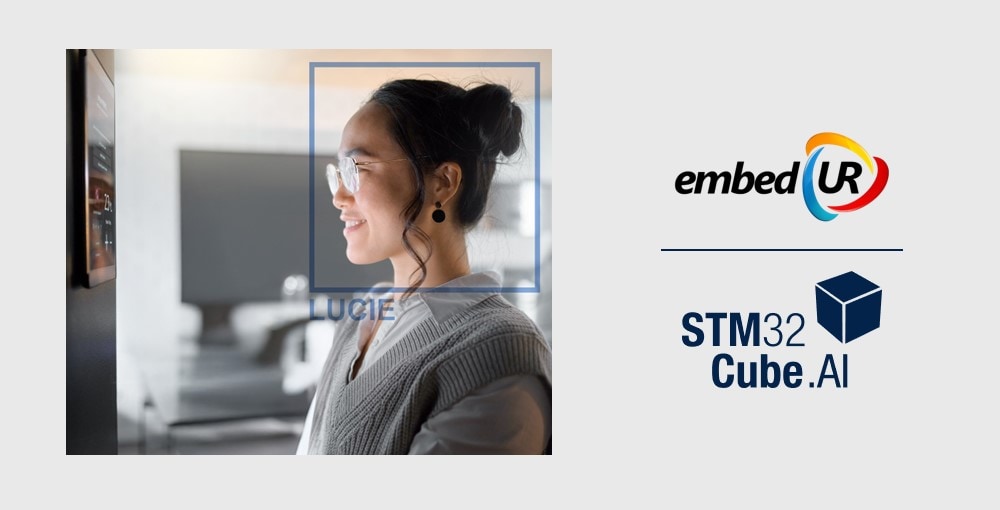

How to personalize a smart home with familiar face identification

embedUR's on-device face authentication embedded on STM32N6 with easy mobile enrollment

Discover how to improve embedded solutions using edge AI.

Vision | STM32Cube.AI | STM32 AI MCU | Partner | Smart home

All‑in‑one STM32N6‑based platform for on‑device edge AI with NPU acceleration

Industrial | Smart city | Vision | STM32Cube.AI | STM32 AI MCU

Edge processing with RGB camera and ToF sensor ensures rapid and secure anti-spoofing for access control using the STM32N6 MCU.

Vision | STM32Cube.AI | STM32 AI MCU | Video | Customer

Meta-Bounds elevates AR glasses with STM32N6 MCU edge AI and computer vision technology

Vision | STM32Cube.AI | STM32 AI MCU | Video | Customer

Prevent distracted driving with real-time edge AI camera technology powered by the STM32N6 microcontroller

Industrial | Partner | STM32 AI MCU | STM32 MCU | Vision

Protecting biodiversity with embedded computer vision for real-time detection and prevention

Industrial | Smart city | Vision | STM32Cube.AI | STM32 AI MCU

How does the STM32N6 improve real-time detection of people, cars, trucks, and cyclists in blind spots?

STM32 MCU | NanoEdge AI Studio | Industrial | Accelerometer | Predictive maintenance

Predictive maintenance for forging presses using local vibration analysis and classification without latency or network dependency.

Customer | STM32 MCU | NanoEdge AI Studio | Industrial | Video

A sensor platform with an STM32 MCU that digitizes odors and complex gases for on-site detection

Industrial | Appliances | Smart city | Vision | STM32Cube.AI

Using STM32N6 MCU and Edge AI to detect and count products in real time — no cloud required.

Partner | Smart city | Transportation | Vision | STM32Cube.AI

Vision AI-powered solution for Automatic Number-Plate Recognition (ANPR) for smart city applications, running on STM32 MCUs

Entertainment | Image recognition | Vision | STM32Cube.AI | Demo

Track and analyze users' body movements to provide feedback on exercise with STM32N6 at 28 FPS.

Tutorial | Demo | MEMS MLC | Accelerometer | Industrial

Monitor and classify the behavior of a fan (e.g. on HVAC units) through the Machine Learning Core available in MEMS sensors.

Tutorial | Demo | MEMS MLC | Gyroscope | Accelerometer

Recognize head gestures such as nodding, shaking, and other general head movements through the Machine Learning Core available in MEMS sensors.

Object detection | Vision | STM32Cube.AI | Idea | GitHub

Detection of personal protective equipment on workers using an object detection AI model.

Predictive maintenance | Accelerometer | NanoEdge AI Studio | Video | Partner

Anomaly detection solution on industrial equipment, running on STM32 MCU.

Customer | STM32Cube.AI | Current sensor | Accelerometer | Predictive maintenance

Tire pressure monitoring solution to improve rider safety and convenience.

Human activity | MEMS Studio | GitHub | Demo | Accelerometer

Package condition classification on sensors.

Human activity | MEMS Studio | GitHub | Demo | Accelerometer

Pose recognition and classification on a sensor.

Human activity | MEMS Studio | Accelerometer | GitHub | Demo

Activity recognition and classification on a sensor.

Context awareness | Gyroscope | NanoEdge AI Studio | Accelerometer | Predictive maintenance

Low-power anomaly detection solution running on a sensor.

Partner | STM32Cube.AI | Vision | Biometric | Smart home

End-to-end AI solution for face identification running on STM32 microcontrollers.

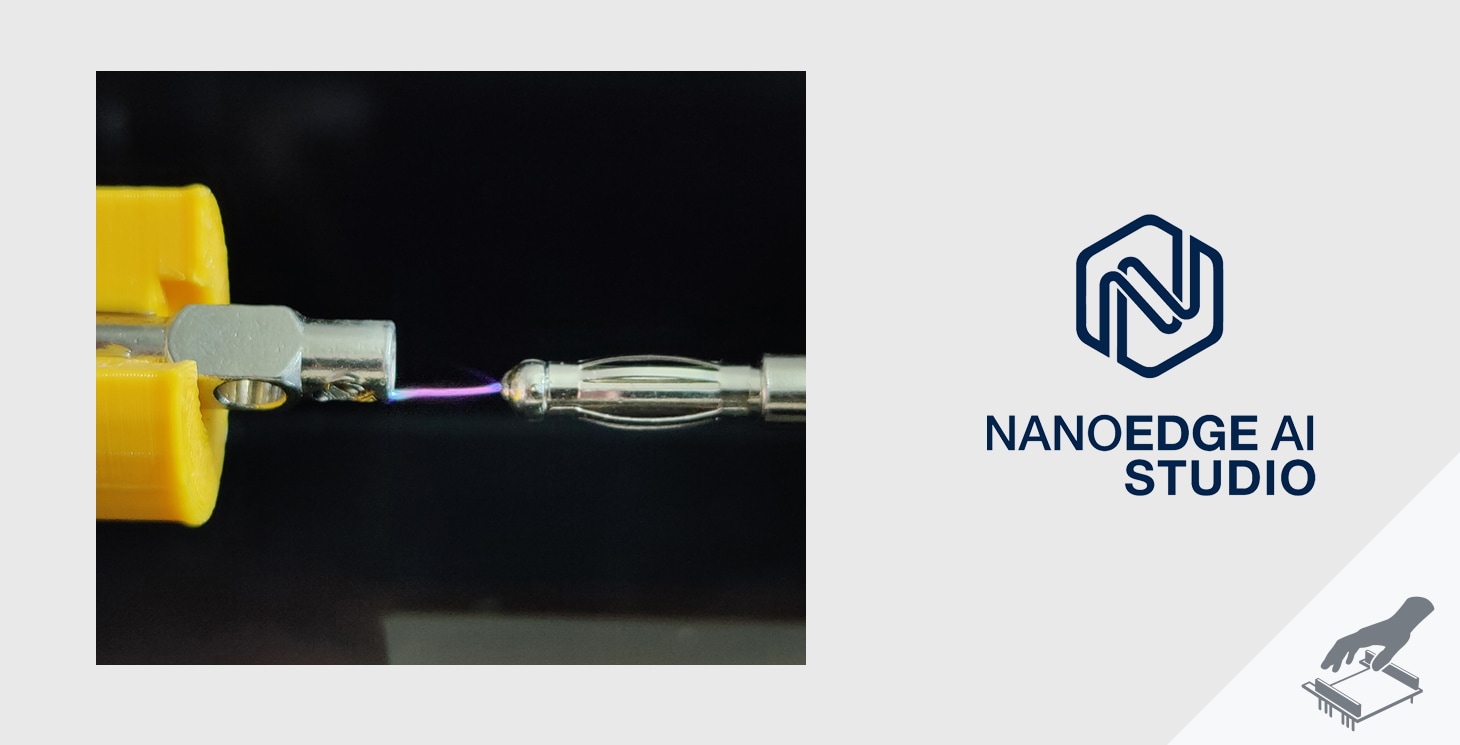

Industrial | Smart city | Predictive maintenance | Current sensor | NanoEdge AI Studio

Build your own arc fault detection mechanism with edge AI.

Entertainment | Human interface | Time of Flight | NanoEdge AI Studio | Human activity

Gesture classification on Arduino using a ToF sensor.

Smart city | Image classification | Vision | STM32Cube.AI | Idea

Classify traffic signs with an image classification model running on an STM32H7 microcontroller.

Appliances | Agriculture | Image classification | Vision | STM32Cube.AI

Classify coffee beans with an image classification model from STM32 Model Zoo running on an STM32H7 microcontroller.

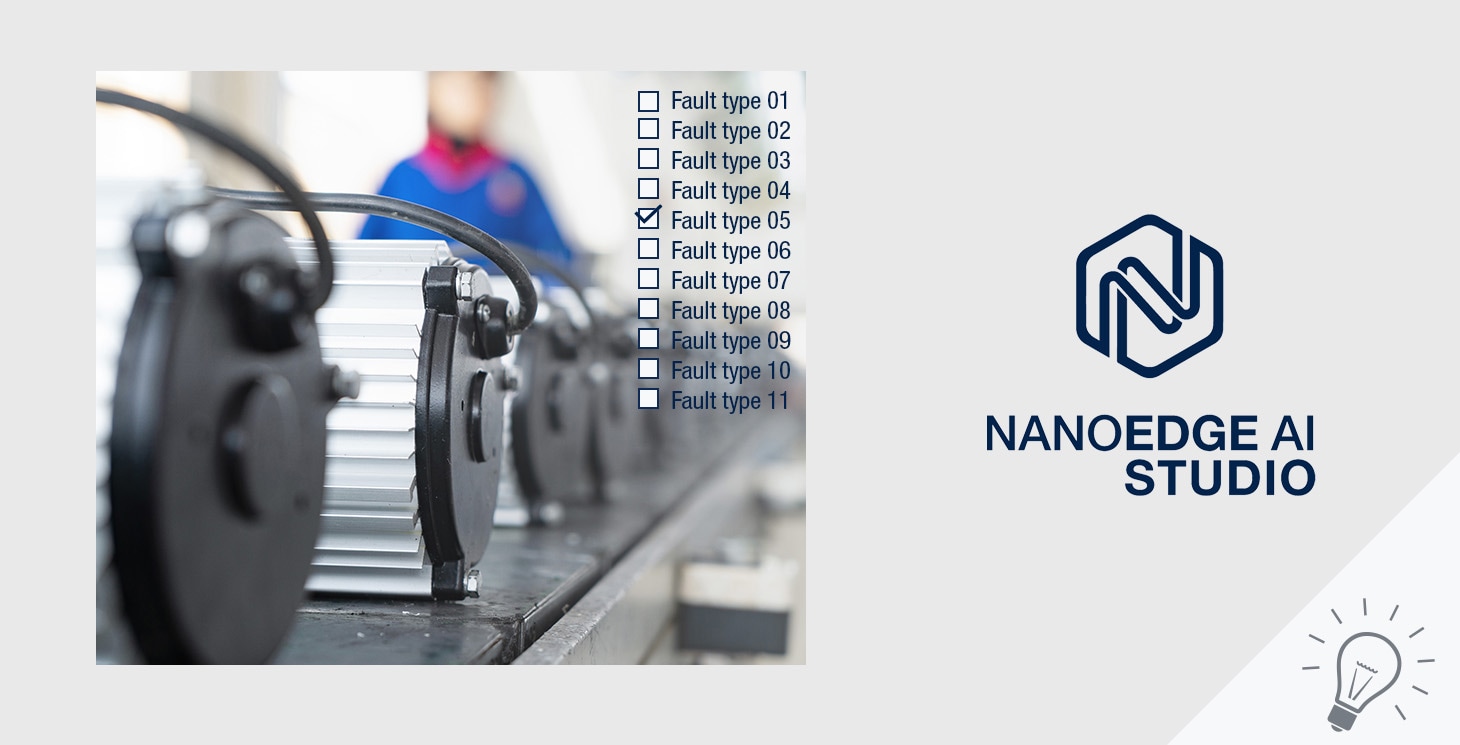

Industrial | Predictive maintenance | Current sensor | NanoEdge AI Studio | Accelerometer

Using AI to detect and diagnose faults in linear actuators.

Environment | Context awareness | Thermal sensor | NanoEdge AI Studio | Idea

Predict the next day's maximum temperature to better prepare for potential disasters.

Wearables | Entertainment | Human activity | Gyroscope | NanoEdge AI Studio

Using a smartphone to recognize human activities.

Industrial | Predictive maintenance | Current sensor | NanoEdge AI Studio | Idea

Detect and classify electrical anomalies in a power system.

Industrial | Predictive maintenance | Current sensor | NanoEdge AI Studio | Idea

Using AI to determine if an electrical grid is stable.

Industrial | Transportation | Predictive maintenance | Thermal sensor | NanoEdge AI Studio

Using AI to extrapolate torque and rotor temperature values to improve motor performance.

Agriculture | Predictive maintenance | Thermal sensor | NanoEdge AI Studio | Idea

Create an AI model that predicts the quality of processed food instead of measuring it.

Industrial | Transportation | Predictive maintenance | Accelerometer | NanoEdge AI Studio

Detection and classification of motor faults for predictive maintenance.

Appliances | Asset tracking | Current sensor | NanoEdge AI Studio | Demo

Using AI to make your home appliances “smarter” and more energy efficient for a sustainable future.

Appliances | Wearables | Entertainment | Smart home | Image classification

Handwriting recognition on ultra-low-power MCU.

Appliances | Entertainment | Smart building | Human interface | Time of Flight

Hand posture recognition running on STM32F401 based on ST multizone Time-of-Flight ranging sensor.

Environment | Agriculture | Image classification | Vision | STM32Cube.AI

Image classification on high-performance MCU. MobileNet 0.25 model from STM32 model zoo.

Environment | Agriculture | Image classification | Vision | STM32Cube.AI

Image classification on high-performance MCU. MobileNetV2 alpha 0.35 model from STM32 model zoo.

Industrial | Appliances | Predictive maintenance | Accelerometer | NanoEdge AI Studio

Smart sensor node with BLE connectivity that simplifies configuration and sends detection notifications through a mobile app.

Industrial | Appliances | Predictive maintenance | Accelerometer | NanoEdge AI Studio

Learn to detect abnormal behavior at the edge on a vibrating machine.

Smart home | Context awareness | Time of Flight | STM32Cube.AI | Video

Advanced solution for material recognition of floor type (hard or soft) enabled by AI technology.

Industrial | Smart city | Asset tracking | Vision | STM32Cube.AI

Equip meters with aftermarket wireless & low-power readers.

Industrial | Appliances | Predictive maintenance | Accelerometer | NanoEdge AI Studio

Learn to detect abnormal behavior at the edge on a vibrating machine.

Smart city | Smart home | Smart office | Context awareness | Vision

Optimized computer vision using an MPU running at 8 FPS.

Industrial | Smart building | Predictive maintenance | Gyroscope | NanoEdge AI Studio

Predictive maintenance solution for industrial equipment.

Entertainment | Context awareness | Accelerometer | NanoEdge AI Studio | Idea

Classification of the net vibrations with an accelerometer.

Industrial | Transportation | Appliances | Smart building | Smart office

Neural Network classification based on a high-frequency analog microphone pipeline.

Transportation | Predictive maintenance | Current sensor | NanoEdge AI Studio | Customer

Predictive maintenance on automatic door motors.

Industrial | Appliances | Predictive maintenance | Current sensor | NanoEdge AI Studio

Current sensing to detect abnormal behaviors in motors.

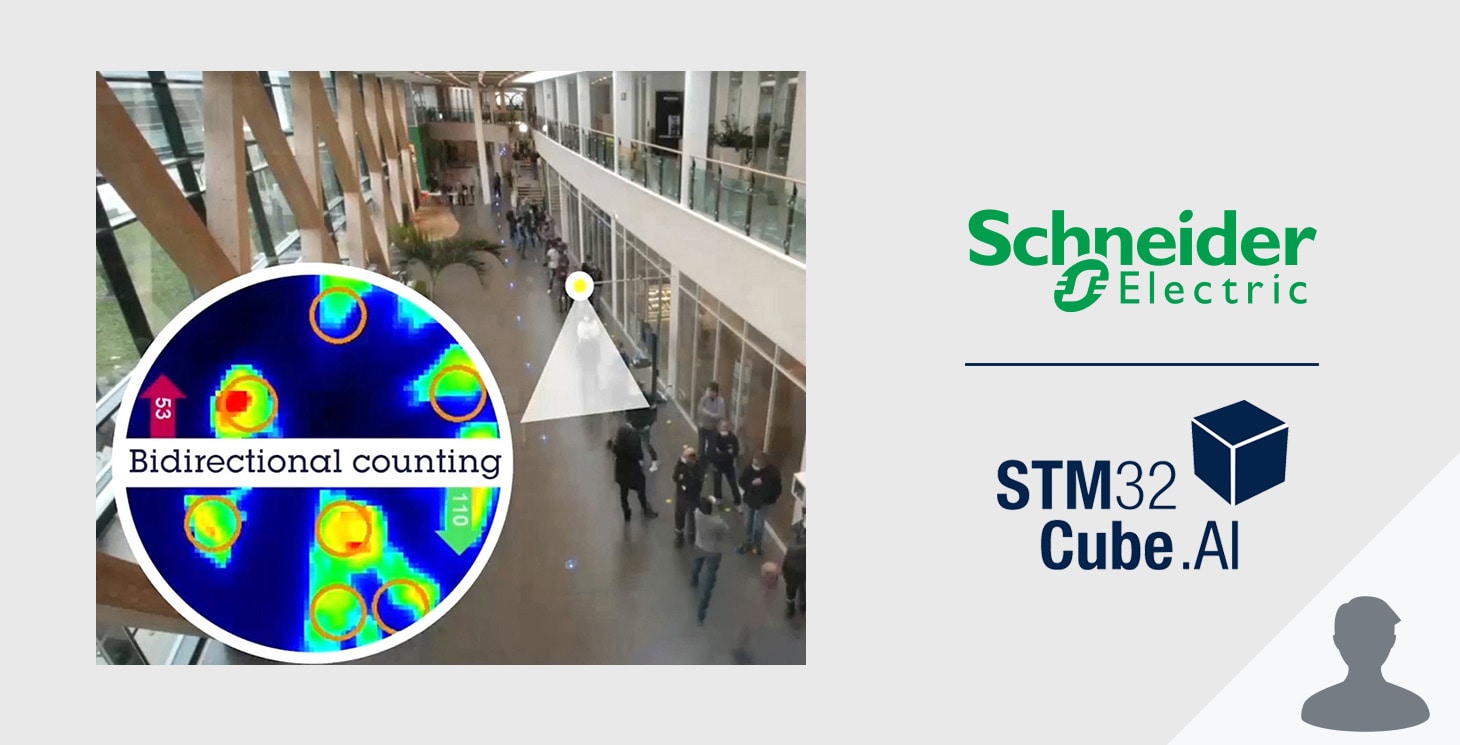

Smart building | Smart office | Object detection | Thermal sensor | STM32Cube.AI

An innovative approach to measure people flows using an in-house thermal sensor.

Appliances | Entertainment | Toys | Human activity | Time of Flight

Trigger actions on a PC using a Time-of-Flight sensor to classify hand movements. Recognition of 3 different classes.

Smart city | Smart home | Smart building | Smart office | Context awareness

Identify different environments (indoor, outdoor, in-car) using a simple microphone.

Industrial | Context awareness | Accelerometer | NanoEdge AI Studio | Predictive maintenance

Enabling optimized maintenance systems in ships.

Appliances | Smart home | Smart building | Biometric | Vision

Face identification on a high-performance MPU.

Industrial | Predictive maintenance | Gyroscope | NanoEdge AI Studio | Accelerometer

Predictive maintenance solution for industrial equipment.

Industrial | Transportation | Asset tracking | Current sensor | NanoEdge AI Studio

Classify data based on different types of faults in an electric drive.

Transportation | Smart home | Smart building | Object detection | Vision

People detection and counting on high-performance MCU.

Transportation | Smart city | Smart building | Smart office | Human interface

Count the number of people passing through a door using a Time-of-Flight sensor.

Industrial | Context awareness | Gas sensor | NanoEdge AI Studio | Predictive maintenance

Predictive maintenance applied to industrial ovens.

Wearables | Entertainment | Human activity | Accelerometer | STM32Cube.AI

Easily identify 5 different activities with a 3D accelerometer.

Transportation | Predictive maintenance | Accelerometer | NanoEdge AI Studio | Idea

Vibration analysis to detect abnormal behavior in a gearbox.

Smart home | Smart building | Smart office | Image classification | Vision

Human detection on high-performance MCU.

Environment | Agriculture | Image classification | Vision | STM32Cube.AI

Image classification on high-performance MCU.

Appliances | Agriculture | Image classification | Vision | STM32Cube.AI

Image classification on high-performance MCU.

Transportation | Predictive maintenance | Accelerometer | NanoEdge AI Studio | Customer

On-track predictive maintenance.

Entertainment | Toys | Human activity | Accelerometer | NanoEdge AI Studio

Analysis of the vibrations produced by the instrument to detect the chord played.

Smart building | Predictive maintenance | Accelerometer | NanoEdge AI Studio | Customer

Adaptive water leakage detection solution.

Appliances | Entertainment | Toys | Human interface | Time of Flight

Implementation on low-power MCU without a camera.

Industrial | Predictive maintenance | Current sensor | NanoEdge AI Studio | Accelerometer

Predictive maintenance on high-tech industrial tools.